
The advantages of infrared thermometry play an important role in thermal management. Consider the need to measure the temperature of an object without disturbing it. Contact might damage or destroy the object, change its temperature by altering its heat transfer characteristics, or cause contamination. The object might be moving or inaccessible. These are prime conditions for infrared temperature measurement, a non-invasive process, but one that must be done correctly.

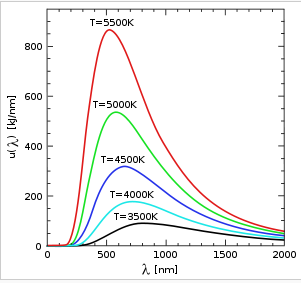

All objects with a temperature above absolute zero (−273 °C) emit radiant energy in proportion to the fourth power of their absolute temperature. Figure 1 shows the amount of radiation emitted at different temperatures as a function of wavelength. The area under each curve represents the total energy emitted. At lower temperatures, most of the radiated energy is in the IR region. As the temperature increases, the wavelength of peak radiation shifts to shorter values, toward the visible region [1].

Figure 1. Distribution of energies as a function of wavelength for blackbodies [1].
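
The two trends described above can be checked numerically. The following is a minimal sketch, assuming an ideal blackbody: the fourth-power dependence is the Stefan-Boltzmann law, and the shift of the peak wavelength is Wien's displacement law. The function names and the sample temperatures are illustrative choices, not values taken from Figure 1.

```python
# Physical constants (CODATA 2018 values).
SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W m^-2 K^-4
WIEN_B = 2.897771955e-3   # Wien displacement constant, m K


def blackbody_emissive_power(temp_k: float) -> float:
    """Total power radiated per unit area by an ideal blackbody, in W/m^2."""
    return SIGMA * temp_k ** 4


def peak_wavelength_um(temp_k: float) -> float:
    """Wavelength of peak spectral emission, in micrometres."""
    return WIEN_B / temp_k * 1e6


# Illustrative temperatures only; they are not read from Figure 1.
for temp_c in (25.0, 500.0, 1500.0):
    temp_k = temp_c + 273.15
    print(f"{temp_c:7.1f} C: peak at {peak_wavelength_um(temp_k):5.2f} um, "
          f"{blackbody_emissive_power(temp_k):10.1f} W/m^2")
```

Under these assumptions the peak sits near 9.7 μm at room temperature, well inside the infrared, and moves to roughly 1.6 μm at 1500 °C, approaching the visible region.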

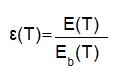

Emissivity is defined as the ratio of the thermal energy emitted by a graybody to that emitted by a blackbody at the same temperature. A graybody is an object that has the same spectral emissivity at every wavelength [2]. Emissivity is given by:

$$\varepsilon = \frac{E(T)}{E_b(T)}$$

where,

$E(T)$ = radiation from a graybody at temperature $T$

$E_b(T)$ = radiation from a blackbody at temperature $T$

$\varepsilon$ = emissivity

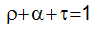

Conservation of energy requires that the three coefficients of absorptivity, reflectivity and transmissivity sum to 1:

$$\alpha + \rho + \tau = 1$$

where:

$\alpha$ = absorptivity

$\rho$ = reflectivity

$\tau$ = transmissivity

According to Kirchhoff’s law, the emissivity $\varepsilon$ of a graybody surface is equal to its absorptivity:

$$\varepsilon = \alpha$$

Most objects of interest for temperature measurement are opaque ($\tau = 0$), therefore

$$\varepsilon + \rho = 1$$
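
Taken together, the energy balance, Kirchhoff’s law and the opacity condition mean that the emissivity of an opaque graybody is simply one minus its reflectivity. The sketch below illustrates this and scales the blackbody emission by the result. The function names and the example reflectivity of 0.9 are hypothetical, chosen only to show why a highly reflective surface emits so little.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def opaque_emissivity(reflectivity: float) -> float:
    """Emissivity of an opaque graybody (transmissivity assumed to be 0)."""
    if not 0.0 <= reflectivity <= 1.0:
        raise ValueError("reflectivity must lie between 0 and 1")
    return 1.0 - reflectivity


def graybody_emissive_power(emissivity: float, temp_k: float) -> float:
    """Power radiated per unit area by a graybody, in W/m^2."""
    return emissivity * SIGMA * temp_k ** 4


# Hypothetical surface reflecting 90% of incident radiation, at 300 K.
eps = opaque_emissivity(0.9)
print(f"emissivity = {eps:.2f}")
print(f"emitted power = {graybody_emissive_power(eps, 300.0):.1f} W/m^2")
```

A surface like this radiates only about a tenth of what a blackbody would at the same temperature, which is why an instrument left at an emissivity setting near 1.0 would read far too low.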

However, materials such as glass, plastic and silicon have non-zero transmission over parts of the infrared spectrum, although they are opaque in certain bands. For example, glass is opaque at 5 μm. In these circumstances, one can use filters to measure these objects in their opaque IR regions [3].

The emissivity of a metal depends on wavelength, temperature and surface condition. Because metals are often highly reflective, they tend to have a low emissivity, which can cause significant measurement errors.

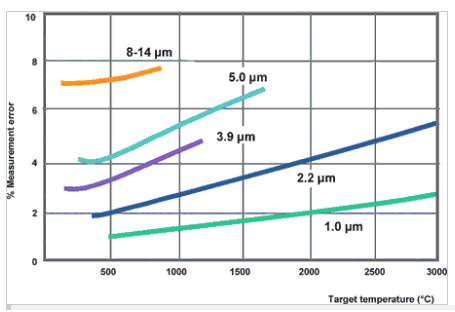

In such cases, it is important to use an instrument that measures infrared radiation at the wavelength and within the temperature range at which the metal has the highest possible emissivity. With most metals, the measurement error becomes more significant with increasing wavelength; hence, it is best to measure at the shortest possible wavelength. Figure 2 shows the percentage error in the measured temperature at different wavelengths and temperatures when the emissivity value is in error by 10% [3].

Figure 2. Percentage error in temperature measurement for a 10% error in emissivity as a function of wavelength [3].
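
The wavelength dependence of this error can be reproduced with a simple model. The sketch below is not the calculation behind Figure 2; it assumes a single-wavelength instrument, Wien's approximation to Planck's law (reasonable only while the product of wavelength and temperature is well below the second radiation constant), and no reflected ambient radiation. The function names and the sample values (a 1000 K target with a true emissivity of 0.50 entered as 0.55) are hypothetical.

```python
import math

C2 = 1.438776877e-2  # second radiation constant, m K


def indicated_temperature_k(true_temp_k: float, wavelength_um: float,
                            true_emissivity: float, set_emissivity: float) -> float:
    """Temperature displayed when the emissivity setting differs from the true value.

    Equates set_emissivity * exp(-C2 / (lam * T_indicated)) with
    true_emissivity * exp(-C2 / (lam * T_true)) and solves for T_indicated.
    """
    lam = wavelength_um * 1e-6  # micrometres to metres
    inv_t = 1.0 / true_temp_k - (lam / C2) * math.log(true_emissivity / set_emissivity)
    return 1.0 / inv_t


def percent_error(true_temp_k: float, wavelength_um: float,
                  true_emissivity: float, set_emissivity: float) -> float:
    """Relative error of the displayed temperature, in percent."""
    indicated = indicated_temperature_k(true_temp_k, wavelength_um,
                                        true_emissivity, set_emissivity)
    return 100.0 * (indicated - true_temp_k) / true_temp_k


# Emissivity setting 10% too high, evaluated at three wavelengths.
for wavelength_um in (1.0, 2.0, 5.0):
    err = percent_error(1000.0, wavelength_um, 0.50, 0.55)
    print(f"{wavelength_um:4.1f} um: {err:+.2f} % temperature error")
```

In this model the same 10% emissivity error produces a temperature error that grows roughly in proportion to wavelength, which is consistent with the advice to measure metals at the shortest practical wavelength.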

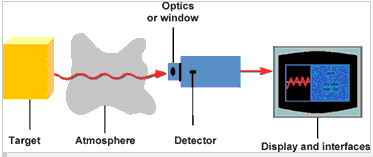

Figure 3 shows the components of an infrared measuring device. The emitted radiation is collected by optics and passed to a detector.

Figure 3. Components of an IR measuring system [3].

There are two types of detectors used in IR measurement: quantum detectors and thermal detectors. Quantum detectors (photodiodes) interact directly with the impacting photons, resulting in an electrical signal. Thermal detectors, on the other hand, change their temperature depending on the impacting radiation, and this temperature change creates a voltage, as in a thermocouple. Because they rely on self-heating, thermal detectors are much slower, with response times in the millisecond range, compared with the ns or μs range of quantum detectors. Among the detectors, the thermopile has the least sensitivity to temperature and lead sulfide (PbS) has the greatest.

Most infrared thermometers can compensate for the different emissivity values of different materials. In general, the higher the emissivity of an object, the easier it is to obtain an accurate temperature measurement using infrared. Objects with very low emissivities (below 0.2) can be difficult to measure. Some polished, shiny metallic surfaces, such as aluminum, are so reflective in the infrared that accurate temperature measurements are not always possible.

There are five ways to determine the emissivity of a material to ensure accurate temperature measurements [4]:

- Heat a sample of the material to a known temperature, as determined by a precise sensor, and measure the temperature using the IR instrument. Then adjust the emissivity value to force the indicator to display the correct temperature.

- For relatively low temperatures (up to about 260 °C, or 500 °F), apply a piece of masking tape, which has an emissivity of 0.95, to the material and measure the temperature of the tape. Then measure an adjacent untaped area and adjust the emissivity value to force the indicator to display the same temperature.

- For high-temperature measurements, a hole (with a depth at least 6 times its diameter) can be drilled into the object. This hole acts as a blackbody with an emissivity of 1.0. Measure the temperature in the hole, then adjust the emissivity to force the indicator to display the correct temperature when aimed at the surface of the material.

- If the material or a portion of it can be coated, a dull paint will have an emissivity of about 1.0. Measure the temperature of the paint, then adjust the emissivity to force the indicator to display the correct temperature on the uncoated material.

- Standardized emissivity values for most materials are available. These can be entered into the instrument as an estimate of the material’s emissivity.
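
The first four methods share one step: compare the IR reading with a trusted reference temperature and adjust the emissivity until they agree. That adjustment can be approximated in a single calculation. The sketch below assumes a broadband instrument whose signal follows the fourth-power law and neglects reflected ambient radiation; real instruments work in a limited spectral band, so this is an illustration of the idea rather than any manufacturer's algorithm. The function name and the sample readings are hypothetical.

```python
def estimate_emissivity(reference_temp_c: float, indicated_temp_c: float,
                        set_emissivity: float = 1.0) -> float:
    """Estimate the true emissivity from a reference and an IR reading.

    Assumes the received signal is proportional to emissivity * T^4, so that
    true_emissivity * T_ref^4 == set_emissivity * T_indicated^4 (T in kelvin).
    """
    t_ref_k = reference_temp_c + 273.15
    t_ind_k = indicated_temp_c + 273.15
    if t_ref_k <= 0.0 or t_ind_k <= 0.0:
        raise ValueError("temperatures must be above absolute zero")
    return set_emissivity * (t_ind_k / t_ref_k) ** 4


# Hypothetical readings: a contact sensor shows 200 C while the IR
# instrument, set to an emissivity of 1.0, shows 150 C.
eps = estimate_emissivity(200.0, 150.0, set_emissivity=1.0)
print(f"estimated emissivity: {eps:.2f}")
```

In practice the value obtained this way would only be a starting point; the final setting is the one at which the instrument itself displays the reference temperature.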

In principle, IR thermography is a viable technique for measuring surface temperatures. Engineers must make sure that emissivity matching is done properly, as discussed in this article. They also have to consider the cost of the unit and the range of spatial resolution needed for each particular application.

References:

1. www.wikipedia.org.

2. Barron, R., Principles of Infrared Thermometry, Williamson Corporation.

3. Principles of Infrared Temperature Measurement, SA Instrumentation and Control, August 2006.

4. Introduction to Infrared Thermometers, Omega Engineering.
